Updated May, 2026

Enterprise teams use only 30 percent of provisioned EBS capacity on average in 2026. It is common to end up in a situation where you launch an EC2 instance with Amazon EBS storage, only to discover your EBS storage allocation is significantly larger than required.

Yet, shrinking an EBS volume can be complex, demanding thorough preparations like creating backups and precise execution of reduction procedures.

Acknowledging the challenges of resizing an AWS EBS volume, this guide explains what EBS volumes are, why they matter in AWS, why you may need to shrink them, and the detailed steps of the reduction process.

Introduction To EBS Volumes & Their Importance In AWS

An Elastic Block Store volume (EBS volume) is akin to a cloud-based hard drive: block storage that you attach to an EC2 instance and format with a file system, much like a physical disk. Volumes come in a range of sizes and types, giving you a flexible storage system for varied data sizes.

EBS volumes operate within a single "availability zone." A volume can be attached only to EC2 instances in the same zone, and its data is automatically replicated within that zone to protect against hardware failure. However, because the replicas all live in that one zone, data that exists only there can become unavailable or be lost if the zone fails.

There are four types of EBS volumes, grouped into two categories: Solid State Drives (SSD) and Hard Disk Drives (HDD). They are:

- General Purpose SSD (GP2 and GP3): Suitable for diverse workloads like transactional workloads, web servers, medium-sized databases, and development environments, offering a balance of price and performance.

- Provisioned IOPS SSD (IO1 and IO2 Block Express): For I/O-intensive applications needing consistent high performance, like I/O-intensive database servers and low-latency storage apps.

- Throughput Optimized HDD (ST1): Tailored for high throughput with higher latency tolerance, fitting data warehouses, log processing, and backups.

- Cold HDD (SC1): Cost-effective for archiving, disaster recovery, and backup scenarios where performance isn't the primary concern.

Now that we know what an EBS volume is and what its types are, let us talk about the benefits that make it essential for AWS.

- Data availability: EBS volumes are designed to be highly available and durable. Because they are automatically replicated within the same availability zone, they can prevent data loss due to hardware failures.

- Data persistence: Data is retained in EBS volumes even if the associated EC2 instance is terminated or stopped. This allows you to preserve your data throughout the instance lifecycle.

- Data encryption: Using EBS volumes provides additional layers of security by encrypting your data at rest. You can use AWS Key Management Service (KMS) to manage the encryption keys.

- Data security: Enhanced data security can be achieved by defining who can access and modify the data on the EBS volumes using AWS Identity and Access Management (IAM).

- Snapshots: Snapshots are incremental, cost-effective, and can be used to restore data or create new volumes. The EBS volume supports making point-in-time snapshots, which offer data protection and recovery capabilities.

- Flexibility: You can choose between various EBS volume types (General Purpose SSD, Provisioned IOPS SSD, Throughput Optimized HDD, and Cold HDD) based on your specific performance and cost requirements. EBS volumes can also be expanded to accommodate growing storage needs without disrupting your instances.

However, some issues associated with EBS volumes contribute to a higher AWS bill. Our process to automate the shrinkage and expansion of storage resources begins with a comprehensive storage discovery.

After conducting storage assessments for multiple organizations that use cloud services, we found three primary problems.

- Idle volumes: Idle EBS volumes are not actively used or serve no purpose. For example, they could have been associated with an instance or application but are no longer needed.

Even though idle volumes are not actively used, they still incur costs while provisioned. AWS charges for the provisioned storage regardless of whether it is being used.

- Overutilized volumes: An overutilized volume occurs when the demands on a particular EBS volume constantly exceed its performance capabilities. In other words, the volume is pushed to its maximum capacity, resulting in performance bottlenecks.

Although overutilization has no direct costs, the performance impact on your application might require upgrading to a more expensive (and higher-performing) EBS volume type.

- Over-provisioned volumes: An over-provisioned volume occurs when more storage is allocated to an EBS volume than an application or instance needs. Too much storage capacity increases costs since you are not utilizing it effectively.
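To make the three categories concrete, here is a minimal sketch of how a volume might be sorted into one of them. The thresholds (90 percent and 50 percent) and the three inputs are illustrative assumptions, not AWS definitions or values taken from our assessments.

```shell
# Classify a volume as idle, overutilized, over-provisioned, or ok.
# All thresholds are illustrative assumptions.
#   $1 = 1 if the volume is attached to an instance, 0 if not
#   $2 = used space as a percentage of provisioned size (0-100)
#   $3 = average IOPS as a percentage of the volume's IOPS limit (0-100)
classify_volume() {
    attached=$1
    used_pct=$2
    iops_pct=$3
    if [ "$attached" -eq 0 ] || [ "$iops_pct" -eq 0 ]; then
        echo "idle"                 # unattached or no I/O at all
    elif [ "$iops_pct" -ge 90 ] || [ "$used_pct" -ge 90 ]; then
        echo "overutilized"         # running at or near its limits
    elif [ "$used_pct" -lt 50 ]; then
        echo "over-provisioned"     # most of the capacity is unused
    else
        echo "ok"
    fi
}
```

For example, `classify_volume 1 30 20` describes an attached volume that is 30 percent full with light I/O, which this sketch reports as over-provisioned.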

Optimizing storage often becomes complex due to the limited scope of Cloud Service Providers' (CSPs) storage features. Meeting storage needs frequently involves developing custom tools, significantly increasing DevOps efforts and time.

Relying solely on CSP tools might lead to manual, resource-heavy processes unsuitable for routine tasks.

Confronted with this challenge, many organizations compromise by over-provisioning. The primary driver behind this choice is the need for uninterrupted application uptime, as disruptions can severely impact daily operations.

Businesses lacking comprehensive tools and dealing with resource-intensive solutions tend to prioritize stability over optimizing resources. As a result, they adopt over-provisioning as a practical but imperfect solution.

However, overprovisioning significantly impacts the overall AWS bill in the following ways:

- Over-provisioning EBS volumes can result in unnecessary costs and inflated AWS bills, especially at scale, which is a substantial burden.

- Overprovisioned EBS volumes hold more capacity than the workload needs, and the surplus sits unused. This is an inefficient use of storage resources.

- Having too much capacity may not necessarily improve performance. It may sometimes create inefficiencies since the system is forced to manage a larger storage volume.
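The cost impact is simple arithmetic: EBS bills for provisioned capacity, so the unused portion is paid for every month. The sketch below assumes a flat price of $0.08 per GB-month purely for illustration; actual prices depend on region and volume type.

```shell
# Monthly cost of provisioned-but-unused capacity.
#   $1 = provisioned size in GB
#   $2 = used size in GB
#   $3 = price per GB-month in USD (assumed value; check current pricing)
wasted_monthly_cost() {
    awk -v prov="$1" -v used="$2" -v price="$3" \
        'BEGIN { printf "%.2f\n", (prov - used) * price }'
}

# A 1000 GB volume holding 300 GB of data, at the assumed price.
wasted_monthly_cost 1000 300 0.08   # prints 56.00
```

At 30 percent utilization, 700 of the 1000 GB are idle, so $56 of the $80 monthly charge pays for empty space under these assumptions.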

Moreover, AWS does not offer a native shrink feature, for reasons that include its emphasis on elasticity and the complexity, variability, and performance implications associated with EBS. This makes it urgent to find another way to shrink EBS volumes.

These issues make AWS EBS volume shrinkage a necessity.

What is AWS EBS Volume Shrinking & Why Is It Necessary?

Shrinking an AWS EBS volume presents a challenge due to the absence of a direct method for reduction, unlike its dynamic expansion counterpart.

While expanding EBS volumes is necessary to keep up with growing data demands, shrinking volumes down as data demands change is also an important element.

For instance, if an AWS EBS volume holds transient data like log files or cache, reclaiming storage over time by shrinking the volume becomes beneficial.
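The asymmetry between growing and shrinking shows up directly in the API. The sketch below wraps the AWS CLI's `describe-volumes` and `modify-volume` commands in a helper that grows a volume and refuses any request to make it smaller. The function name is hypothetical, and the AWS CLI with valid credentials is assumed.

```shell
# Grow a volume with modify-volume; refuse requests that would shrink it.
#   $1 = volume ID
#   $2 = requested size in GiB
resize_volume() {
    vol_id=$1
    new_size=$2
    current=$(aws ec2 describe-volumes --volume-ids "$vol_id" \
        --query 'Volumes[0].Size' --output text) || return 1
    if [ "$new_size" -le "$current" ]; then
        echo "refusing: $vol_id is ${current} GiB; modify-volume cannot reduce size" >&2
        return 1
    fi
    aws ec2 modify-volume --volume-id "$vol_id" --size "$new_size"
}
```

A call such as `resize_volume vol-0123456789abcdef0 200` (a placeholder volume ID) would succeed for a 100 GiB volume, while asking for 50 would stop before any API call that changes the volume.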

Several reasons underscore the need for AWS EBS volume shrinkage:

- Cost optimization: Overprovisioning larger AWS EBS volumes to accommodate anticipated growth often leads to unnecessary costs. Cost optimization drives the necessity for volume shrinkage, as a significant portion of cloud bills is attributed to overprovisioned storage resources. Reducing volumes to actual storage needs helps curb expenses.

- Resource efficiency: Reduced EBS volumes require fewer resources, improving resource utilization for resource-constrained environments.

- Flexibility: Shrinkage offers adaptability in managing storage resources, aligning them more effectively with evolving workloads.

- Data cleanup: You can reduce the size of your EBS volume and eliminate redundant or obsolete data by shrinking the volume. This allows you to streamline your storage infrastructure and clean up your storage.

Wondering what challenges are associated with AWS EBS volume shrinkage?

AWS doesn't offer direct support for live EBS volume shrinkage, necessitating workarounds that involve multiple steps and tools.

Manual intervention makes the process cumbersome, and the reliance on different techniques lengthens the AWS EBS volume shrinkage process and requires additional resources.

Moreover, the preparation and execution of the shrinkage process might demand pausing certain services, leading to temporary disruption and downtime.

How?

When shrinking an EBS volume, resizing the file system to make it smaller is essential. This often requires unmounting or taking the file system offline temporarily.

As a result, applications relying on the data stored in that file system may face downtime during this operation.

In certain situations, shrinking an AWS EBS volume requires an offline resizing operation. In those cases, the volume is briefly taken offline for resizing. This might lead to downtime since the volume cannot be actively used while the data is moved and resized.

Additionally, it is advisable to create a backup of volume data before initiating a manual shrink operation to prevent data loss. However, this backup process might also result in downtime, especially if a consistent file system snapshot is required.

Step-By-Step Guide To Shrinking An AWS EBS Volume

Before delving into the steps for reducing the size of an EBS volume in AWS, it's important to clarify that there is no straightforward, built-in way for EBS volumes to be shrunk directly from the AWS console. It is, therefore, necessary to create a new volume and transfer the existing data to it as part of the process.

Preparing For EBS Volume Shrinkage

Preparing for EBS volume shrinkage requires careful consideration:

- Backup Critical Data: Ensure you have a backup of essential data before initiating the shrinkage process. This step is crucial to prevent data loss during volume operations.

- IAM Permissions: Verify that your AWS Identity and Access Management (IAM) user possesses the necessary permissions to perform volume-related actions and create new volumes.

- Document Configuration: Record vital configuration details such as volume size, instance type, attached block devices, and mount points. This documentation serves as a reference for the shrinkage process and future replication needs.

- Assess Impact: Understand the potential impact of volume shrinkage on application performance and available storage capacity. Anticipate possible downtime or disruptions during the shrinkage process and plan accordingly.

By thoroughly preparing and documenting these aspects, you'll be better equipped to navigate the volume shrinkage process and mitigate potential issues that may arise during the operation.
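For the "Document Configuration" step, a small helper can capture the current layout in one file. This is a minimal sketch that assumes a Linux instance with `lsblk`, `df`, and `blkid` available; the function name and default file name are hypothetical.

```shell
# Save block devices, filesystem usage, and UUIDs/labels to a file.
#   $1 = output file (optional)
record_storage_config() {
    out=${1:-storage-config-before-shrink.txt}
    {
        echo "## block devices and mount points"
        lsblk
        echo "## filesystem usage"
        df -h
        echo "## UUIDs and labels"
        sudo blkid
    } > "$out"
    echo "wrote $out"
}
```

Running `record_storage_config` before the change gives you a reference to compare against once the new volume is in place.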

Checking For Volume Eligibility

Ensuring your EBS volume is eligible for resizing is critical:

- Verification Process: AWS doesn't universally support resizing all EBS volume types or setups. To confirm if your volume is eligible, consult AWS documentation or reach out to AWS support for clarification.

- Risks of Ineligibility: Attempting to shrink an ineligible volume might result in incorrect resizing, issues during the process, and potential problems post-shrinkage. This includes the risk of unplanned downtime, service interruptions, and even data loss.

Checking your volume's eligibility beforehand is pivotal to avoid complications and maintain service continuity while executing a volume shrinkage procedure.
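One practical check you can run yourself is whether the data currently on the volume fits the target size with room to spare. The sketch below is an assumption about what a reasonable pre-check looks like, not an AWS eligibility rule; the headroom percentage is yours to choose.

```shell
# Succeed when used space plus a safety margin fits the target size.
#   $1 = used space in GB
#   $2 = target volume size in GB
#   $3 = headroom to keep free, as a percentage of used space
fits_target_size() {
    needed=$(( ($1 * (100 + $3) + 99) / 100 ))   # round up to a whole GB
    [ "$needed" -le "$2" ]
}
```

With 20 GB used and 20 percent headroom, `fits_target_size 20 30 20` succeeds because 24 GB fits in 30 GB, while `fits_target_size 28 30 20` fails because 34 GB does not.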

Creating A Snapshot Of The Existing Volume

A snapshot is a point-in-time image of an EBS volume that captures all its data, settings, and configurations as they are. Creating a snapshot as a precautionary measure before adjusting an EBS volume is indeed a wise step.

That is because this snapshot is a safety net in case of data loss or errors during shrinkage. If an unexpected issue or corruption occurs, you can restore your data from the snapshot. To create one:

- Locate EBS Volumes: Access your EBS volumes through the EC2 dashboard.

- Select Volume: Right-click on the specific volume you intend to snapshot.

- Initiate Snapshot: From the menu, select "Create Snapshot."

- Description: Provide a descriptive name, like "snapshot-my-volume," in the "Description" field of the snapshot creation form.

- Identification: Consider adding a key-value pair for identification, setting "Name" as the key and "snapshot-my-volume" as the value.

- Snapshot Creation: Confirm and initiate the snapshot creation by clicking the "Create Snapshot" button.

This snapshot serves as a safeguard, enabling you to restore data from a specific point if any unexpected issues or data loss occur during the shrinkage process.
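The same snapshot can be taken from the command line. The sketch below uses the AWS CLI's `create-snapshot` and `wait snapshot-completed` commands with the description and Name tag from the console steps; the function name is hypothetical, and the AWS CLI with valid credentials is assumed.

```shell
# Create a tagged snapshot and block until it has completed.
#   $1 = volume ID
#   $2 = name, used as both the description and the Name tag
snapshot_volume() {
    vol_id=$1
    name=$2
    snap_id=$(aws ec2 create-snapshot \
        --volume-id "$vol_id" \
        --description "$name" \
        --tag-specifications "ResourceType=snapshot,Tags=[{Key=Name,Value=$name}]" \
        --query 'SnapshotId' --output text) || return 1
    aws ec2 wait snapshot-completed --snapshot-ids "$snap_id" || return 1
    echo "$snap_id"
}
```

Calling `snapshot_volume vol-0123456789abcdef0 snapshot-my-volume` (with your own volume ID in place of the placeholder) prints the new snapshot ID once the snapshot is usable.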

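Creating A Smaller Volume

Stop the EC2 instance and create a new, empty EBS volume of the desired size in the same availability zone. AWS does not allow a volume restored from a snapshot to be smaller than the snapshot itself, so the snapshot stays in reserve as a backup and the data is copied onto the new volume in a later step. Follow the steps mentioned below.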

- Stop EC2 Instance: Ensure your EC2 instance is stopped to prevent data inconsistencies during volume creation.

- Access AWS Management Console: Log in to AWS and navigate to the Elastic Block Store (EBS) section under the Volumes tab.

- Start Volume Creation: Click on "Create Volume" to initiate the creation of a new EBS volume.

- Volume Configuration: Choose the same volume type as your previous volume to maintain compatibility and performance and specify the desired volume size (e.g., 30 GB) in the size field.

- Select Availability Zone: Ensure the new volume is in the same availability zone as your EC2 instance for seamless accessibility.

- Tagging for Identification: Add a tag using the key "Name" and value "new-volume." Tags help quickly identify and organize stored data and maintain a structured approach.

These steps ensure you create a new EBS volume with the desired size and configuration in the appropriate availability zone.
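For reference, the console steps above map onto a few AWS CLI calls. This sketch deliberately passes no snapshot ID, because a volume created from a snapshot cannot be smaller than that snapshot. The function name is hypothetical, and the AWS CLI with valid credentials is assumed.

```shell
# Stop the instance, then create an empty volume of the target size.
#   $1 = instance ID
#   $2 = availability zone of the instance
#   $3 = size in GiB
#   $4 = volume type (same as the old volume)
create_target_volume() {
    instance_id=$1
    az=$2
    size=$3
    vol_type=$4
    aws ec2 stop-instances --instance-ids "$instance_id" >/dev/null || return 1
    aws ec2 wait instance-stopped --instance-ids "$instance_id" || return 1
    aws ec2 create-volume \
        --availability-zone "$az" \
        --size "$size" \
        --volume-type "$vol_type" \
        --tag-specifications 'ResourceType=volume,Tags=[{Key=Name,Value=new-volume}]' \
        --query 'VolumeId' --output text
}
```

For example, `create_target_volume i-0123456789abcdef0 us-east-1a 30 gp3` would print the ID of a new 30 GiB volume; the instance ID and zone here are placeholders.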

Attaching The New Volume To The Instance

- Navigate and right-click on the new volume.

- Click Attach Volume

- Choose the instance name and then click Attach.

- Now, you can start the instance and log in over SSH.

- Format the new volume.

- To determine if the volume contains any data, use the following command: sudo file -s /dev/xvdf (this guide assumes the new volume appears as /dev/xvdf; confirm the device name on your instance with lsblk)

- If the output is "/dev/xvdf: data", the volume is empty and can be formatted.

- Any other output implies that the volume already contains a file system or data, so avoid formatting it.

- If the volume is confirmed to be empty, you can format it with the following command:

sudo mkfs -t ext4 /dev/xvdf

- This command formats the volume with an ext4 filesystem, ready to use.

Follow these steps carefully so that you confirm the volume is empty before you format it.
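The check-then-format sequence is easy to get wrong by hand, so it can help to wrap it in a guard. The sketch below formats a device only when `file -s` reports plain "data" for it, which is the empty-volume output described above; the function name is hypothetical.

```shell
# Format a device as ext4 only when `file -s` finds no filesystem on it.
#   $1 = block device, e.g. /dev/xvdf
format_if_empty() {
    dev=$1
    probe=$(sudo file -s "$dev") || return 1
    if [ "$probe" = "$dev: data" ]; then
        sudo mkfs -t ext4 "$dev"
    else
        # Anything else means a filesystem or data is already present.
        echo "not formatting $dev: $probe" >&2
        return 1
    fi
}
```

Running `format_if_empty /dev/xvdf` on a volume that already holds a filesystem prints what was found and leaves the volume untouched.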

Mount New Volume

- First, create a directory where you'll mount the new volume using the following command:

sudo mkdir /mnt/new-volume

- Next, mount the new volume into the directory you just created with the following command: sudo mount /dev/xvdf /mnt/new-volume

- To confirm that the new volume is successfully mounted, you can check the available disk space using the following command: df -h

The output should list the new volume among the mounted filesystems.

Copy Data From The Old Volume To The New Volume

To transfer data from the old volume to the new volume, you can use the rsync command with the following syntax: sudo rsync -axv / /mnt/new-volume/

Allow the data transfer to complete without interruption. How long it takes depends on how much data is being transferred.
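If you want more assurance that the copy is complete, one option is to run rsync a second time in dry-run mode and see what it would still transfer. The sketch below is a variant of the command above rather than a replacement for it: it adds flags to preserve hard links, ACLs, and extended attributes, and keeps `-x` so that rsync stays on the root filesystem and does not descend into the mounted new volume. The function name is hypothetical.

```shell
# Copy the root filesystem, then list anything that still differs.
#   $1 = mount point of the new volume
copy_root_to() {
    dest=${1%/}/
    sudo rsync -aHAXx --numeric-ids / "$dest" || return 1
    # -n = dry run, -i = itemize changes; nothing is written in this pass.
    sudo rsync -aHAXxni --numeric-ids / "$dest"
}
```

On a quiet system the second pass should list few or no files. Entries that do appear are typically logs or other files that changed while the copy was running.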

Prepare New Volume

- To install GRUB on the new volume, use the following command: sudo grub-install --root-directory=/mnt/new-volume/ --force /dev/xvdf

- After installing GRUB, unmount the new volume using the following command: sudo umount /mnt/new-volume

- Verify the UUIDs of your devices using the blkid command.

- Note down the UUID of /dev/xvda1, because you will need it in the next step.

- Replace the UUID on /dev/xvdf using the tune2fs command with the UUID copied from the old /dev/xvda1.

sudo tune2fs -U COPIED_UUID /dev/xvdf

- Substitute the UUID you noted earlier for "COPIED_UUID."

- To determine the system label of the old volume (/dev/xvda1), use the following command:

sudo e2label /dev/xvda1

It will show a string such as "cloudimg-rootfs."

- Set the same system label on the new volume (/dev/xvdf) as the old volume using the following command:

sudo e2label /dev/xvdf cloudimg-rootfs

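- Once the UUID and label are updated, log out of the SSH session. Then stop the instance, detach both volumes, and attach the new volume under the device name the old root volume used, so that the instance boots from it.

Doing so ensures that the new volume boots through GRUB with the correct UUID and the same system label as the old volume, which is essential for system functionality and consistency.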
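The UUID and label steps can be combined so that nothing has to be copied by hand. This is a minimal sketch using the same `blkid`, `tune2fs`, and `e2label` tools as above; the function name is hypothetical, and it assumes both filesystems are ext4 and the new volume is unmounted.

```shell
# Copy the filesystem UUID and label from the old root to the new volume.
#   $1 = old root partition, e.g. /dev/xvda1
#   $2 = new device, e.g. /dev/xvdf (must be unmounted)
clone_fs_identity() {
    old=$1
    new=$2
    uuid=$(sudo blkid -s UUID -o value "$old") || return 1
    label=$(sudo e2label "$old") || return 1
    sudo tune2fs -U "$uuid" "$new" || return 1
    sudo e2label "$new" "$label"
}
```

Calling `clone_fs_identity /dev/xvda1 /dev/xvdf` reads both values from the old volume and applies them to the new one in a single step.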

Verifying The Success Of The Shrinkage Process

Verifying the success of the shrinkage process involves checking several things to confirm that the operation completed correctly.

- Go to the EC2 service and open the Instances page.

- Find and select the instance to which the EBS volume is attached.

- The attached EBS volume will be displayed in the instance details (under the "Storage" tab in the current console, or "Description" in older versions).

- Confirm that the attached EBS volume has the new, smaller size you specified.

- Make sure your EC2 instance is up and working. If the instance was stopped during the volume-shrinking process, start it again and confirm that it reaches the running state.

- Log into your EC2 instance through SSH or otherwise.

- Check the filesystem size and available space with the df -h command. Ensure the filesystem size equals the new volume's size with no lost data or file corruption.

- Check your applications and data for data loss, corruption, or any other effect on the system's functionality. Ensure that all your applications are running properly.

- Make sure to have CloudWatch alarms or any other suitable monitoring solution in place for tracking the health of the EBS volume. Set up alarms that will notify you whenever there are problems after shrinking.

- Inspect the system logs and error messages of the EC2 instance and AWS CloudWatch for volume shrinkage-related problems.

- Perform tests for your applications and use cases to ensure they work well after reducing the volume. Identify any problems, discrepancies, or deterioration in performance.
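The size check in particular is easy to script. The sketch below asks the AWS CLI for the volume's size and compares it with the size you expected; the function name is hypothetical, and the AWS CLI with valid credentials is assumed.

```shell
# Compare a volume's provisioned size with the expected size.
#   $1 = volume ID
#   $2 = expected size in GiB
verify_volume_size() {
    actual=$(aws ec2 describe-volumes --volume-ids "$1" \
        --query 'Volumes[0].Size' --output text) || return 1
    if [ "$actual" -eq "$2" ]; then
        echo "ok: $1 is ${actual} GiB"
    else
        echo "mismatch: $1 is ${actual} GiB, expected $2 GiB" >&2
        return 1
    fi
}
```

A non-zero exit status makes the helper usable as a gate in a larger script, so later steps such as deleting the old volume only run when the new one has the right size.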

There's no denying that the above steps indeed demand considerable time and resources, impacting the overall productivity and potentially affecting revenue generation for an organization.

The intricacies involved in each stage, from eligibility checks to data migration, coupled with the need to navigate various tools, make it labor-intensive and error-prone.

Scaling such manual procedures across a vast environment becomes impractical and risky, and the resulting system downtime can significantly impact financial performance.

However, AWS doesn't provide a native live shrinkage feature. This is where Lucidity's solution comes into play. Our tools and services aim to streamline and automate the EBS shrinkage as well as expansion process, offering a more efficient, less error-prone, and less time-consuming alternative to the current manual procedures.

This saves time and resources and reduces the risk of downtime and errors associated with complex manual tasks.

Lucidity For Automated EBS Volume Management

While the steps above work to functionally shrink AWS EBS volumes by creating a smaller volume and migrating over, the process is long and requires downtime.

Owing to the complexities associated with the process, the probability of data loss, and other such reasons, this process of EBS shrinking often goes undone in enterprise environments.

Several other reasons that necessitate a better process for AWS EBS shrinkage are:

- Several complex steps are involved in resizing an Elastic Block Store (EBS) volume. A snapshot is created, a reduced-size volume is generated, and data is restored to the newly created volume. Each of these actions requires meticulous configuration and validation.

- Inadvertent deletions of critical data or incorrect configurations can result in irreversible data loss when EBS volumes are shrunk manually.

- Often, shrinking EBS volumes requires the instance that uses the volume to be stopped, impacting its availability, especially for mission-critical applications.

- Taking a snapshot and copying the data onto a new volume adds more steps and complexity to the process. It can also take a long time, especially if the volume has a lot of data.

- To ensure a successful shrinkage process, you may need to perform thorough testing and validation, which can require expertise.

The manual process of shrinking AWS EBS volumes doesn't just demand profound expertise and add complexity; it also comes with substantial cost implications:

- Downtime cost: There may be a period of downtime during the shrinking process. This may impact the availability of your application and, depending on your use case, may result in financial losses.

Moreover, the process takes longer for larger EBS volumes than for smaller ones. End users may experience prolonged downtime and longer wait times during this operation.

- Inefficient use of DevOps time: Manual EBS shrinkage severely impacts the DevOps team's time and efforts. When shrinking storage resources manually in EBS, you'll need to create snapshots, create a new, smaller volume, and migrate the data from the old volume to the new one.

This process can take a lot of time, especially if the data is large.

Data loss or service interruptions can occur if any step in a manual process is missed, such as creating a snapshot, detaching a volume, or migrating data.

Moreover, the shrinkage process of EBS volumes may require coordination with several teams, including system administrators, database administrators, and developers.

Coordination across teams can add complexity and increase overall shrinkage time.

Given the drawbacks of manual EBS shrinkage, there's a growing need for a simpler and more efficient process to mitigate overprovisioning's impact on an organization's financial health.

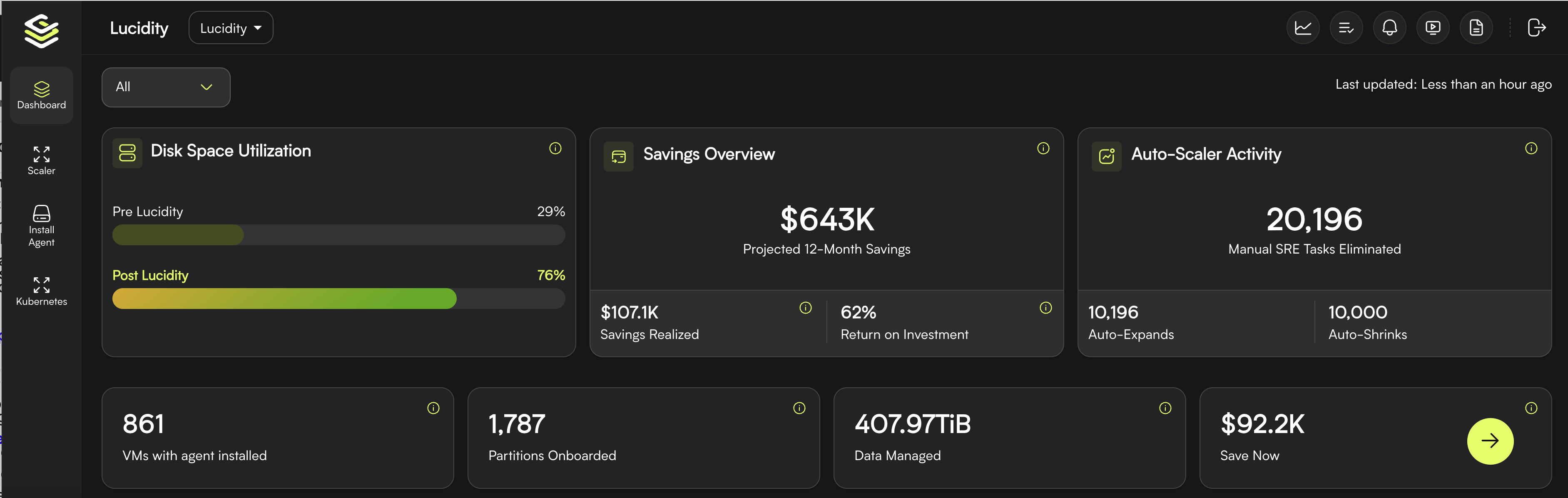

Recognizing this need, we've developed a live block storage AutoScaler at Lucidity—an autonomous solution designed to simplify cloud storage management.

We offer the industry's first autonomous storage orchestration solution, which ensures that your block storage is rightsized continuously without you having to lift a finger.

Mounted atop your block storage and cloud service provider, our EBS AutoScaler frees you from the hassle of overprovisioning and underprovisioning since it offers seamless expansion and shrinkage of storage resources without any downtime, buffer time or performance issues.

Lucidity's EBS AutoScaler works towards providing a NoOps experience and offers the following benefits.

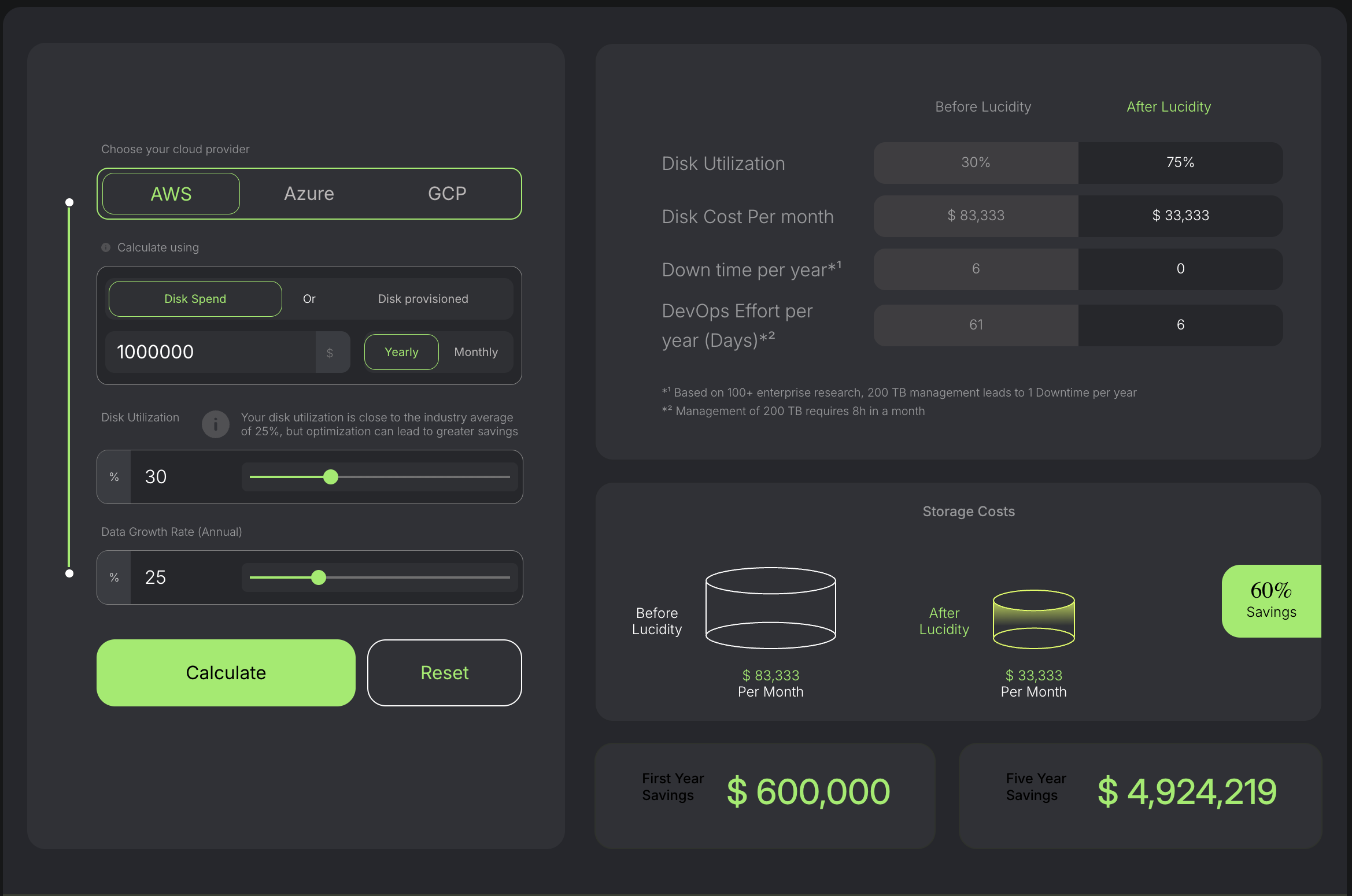

- Up to 70% reduction in storage cost: By automating the EBS shrinkage and expansion process, you can save up to 70% on storage costs and raise disk utilization from 35% to 80%. With Lucidity installed in your system, you can rest assured that you no longer have to pay for underutilized or idle resources.

Utilizing our ROI Calculator, you can calculate how much Lucidity can save your organization. Enter critical details such as disk expenditures, utilization metrics, and annual growth. Lucidity's cost reduction calculator will provide a comprehensive estimate of possible savings.

- Automated expansion and shrinkage: The manual method described above has implementation challenges and drawbacks, which can impact productivity by causing significant downtime. This is where Lucidity's EBS AutoScaler comes to your rescue. You can rely on our EBS AutoScaler to automatically adjust capacity when demand spikes or usage falls below optimal levels.

- No downtime: In conventional resource provisioning, the DevOps team is required to navigate through three separate tools, which not only leads to substantial downtime but also hinders overall productivity.

However, Lucidity offers an alternative. Our automated resizing that takes place within minutes of your request guarantees that your storage needs are always met, eliminating manual intervention and minimizing disruptions.

Lucidity offers customizable policies to ensure smooth operation and maximum efficiency. With the ability to set utilization thresholds, minimum disk requirements, and buffer sizes, Lucidity effortlessly manages instances according to your preferences.

It's worth noting that you can create unlimited policies with Lucidity, allowing for precise adjustment of storage resources as your needs evolve.

Will the continuous process impact your daily operations?

You have nothing to worry about since Lucidity is designed to consume only 2% of RAM and CPU. This ensures that your workload is never disturbed.

How do we go about the process?

Before deploying anything, customers usually run a free, 15-minute Lucidity Assessment to gain a clear understanding of their disk health, focusing on disk utilization, downtime risks, and wastage.

With Lucidity Assessment, clients get a user-friendly way to create and validate their own business cases.

The assessment can be conducted in just a few clicks, providing clients with valuable insights into their storage utilization so they can optimize their cloud resources.

Once we help you figure out the reasons behind overprovisioning and excessive spending, which could be due to underutilized or idle resources, you can easily and directly install the EBS AutoScaler, which autonomously shrinks and expands your cloud block storage resources based on real-time data requirements.

How did we help Bobble AI save on DevOps efforts and ensure efficient EBS management?

Bobble AI, a dynamic technology company, relied on AWS Auto Scaling Groups for scalability but encountered challenges in managing Elastic Block Store (EBS).

This led to cost overruns and operational complexities. Seeking a solution, they approached our team to enhance their AWS infrastructure.

The issue originated from managing EBS volumes within Bobble's system. The volume size was initially set at 100 GB in the Amazon Machine Image (AMI), and new instances inherited this fixed size regardless of usage.

Resizing these volumes involved creating new AMIs, scaling volumes, and refreshing the cycle, causing significant time delays.

One critical challenge was volumes reverting to their original size every 24 hours during instance refresh cycles, complicating daily system upgrades.

Lucidity addressed this by integrating its AutoScaler agent into Bobble's AMI. This streamlined ASG deployment by seamlessly incorporating Lucidity AutoScaler into newly spawned EBS volumes through Bobble's launch template.

This enabled automatic scaling of volumes based on workload, maintaining a consistent utilization of 70-80%.

Lucidity's implementation streamlined the tedious task of resizing EBS volumes within the ASG at Bobble. Previously, the process involved coding, AMI creation, and full cycle refreshes.

With Lucidity, this cumbersome procedure was eliminated. Now, Bobble enjoys a simplified, low-touch system as Lucidity efficiently handles EBS volume provisioning. This streamlined process effortlessly scales to manage over 600 monthly instances at Bobble.

Optimize AWS EBS Volume With Effective Shrinking

Reducing EBS volumes to align with an organization's storage needs is crucial to minimize unnecessary expenses and maintain system efficiency.

Automation stands out as a reliable, repeatable, and efficient solution for this task. Automating the shrinkage process minimizes errors, ensuring consistent execution and streamlining operations.

In the dynamic realm of cloud environments, automation for EBS volume shrinkage serves as both a cost-saving strategy and a method to enhance operational agility and resource management.

Let Lucidity take charge of managing your fluctuating storage needs. Book a demo today to discover how our solution ensures seamless AWS EBS volume shrinkage.

Frequently Asked Questions: Shrinking AWS EBS Volumes

Q1. Can you shrink an EBS volume in AWS natively?

No. AWS does not support shrinking EBS volumes natively. You can only grow an EBS volume through modify-volume API calls. To reduce an EBS volume, you must take a snapshot as a backup, provision a new, smaller volume, migrate the data, and detach the original, which introduces downtime and operational risk.

Q2. Why would I need to shrink an EBS volume?

You shrink EBS volumes to cut storage waste. Enterprise teams typically use only 30 percent of the block storage they provision. Overprovisioning costs 40 to 70 percent more than right-sized storage, compounds every quarter, and is often the largest untouched line item in an AWS bill.

Q3. What is the manual process for shrinking an EBS volume?

Take a snapshot, create a fresh volume at the target size (a volume restored from a snapshot cannot be smaller than the snapshot), attach it to the instance, migrate the filesystem data (rsync, dd, or tar), verify integrity, unmount the old volume, remount the new one, and detach and delete the old volume. Plan for maintenance window downtime and rollback readiness.

Q4. Can I shrink an EBS volume without downtime?

The native AWS process requires downtime for the filesystem migration step. Lucidity AutoScaler autonomously rightsizes overprovisioned EBS volumes without downtime and without service disruption, using scoped, targeted actions on specific volumes flagged by the Lucidity Assessment.

Q5. Does shrinking an EBS volume reduce AWS costs?

Yes. EBS is billed per provisioned GB-month regardless of utilization, so reducing provisioned capacity reduces your bill proportionally. A 40 percent reduction in provisioned EBS capacity reduces the EBS portion of your bill by 40 percent. Customers using Lucidity AutoScaler typically save 40 to 70 percent on block storage.

Q6. Can I automate EBS volume shrinkage?

AWS does not provide native automation for shrinking. You can script the manual process with CloudFormation or Lambda, but you still carry the downtime and rollback risk. Lucidity AutoScaler autonomously rightsizes overprovisioned EBS volumes continuously, with scoped permissions and pen-tested security.

Q7. What are the risks of manually shrinking EBS volumes?

The primary risks are data loss during filesystem migration, snapshot consistency issues on live databases, extended downtime if rollback is needed, and alarm or monitoring breakage when volumes are swapped. The risk profile is why most teams overprovision rather than shrink manually.

Q8. How do I identify which EBS volumes should be shrunk?

Look for volumes with sustained utilization below 50 percent over 30 days. CloudWatch VolumeReadBytes and VolumeWriteBytes show I/O activity, while filesystem-level disk utilization metrics (for example, those published by the CloudWatch agent) show how full each volume is; together they surface the candidates. The Lucidity Assessment produces a full list of overprovisioned EBS volumes and projected savings in 15 minutes, agentless and read-only.
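Once you have an average utilization figure per volume, picking out the candidates is a one-line filter. The sketch below assumes you have already exported those figures as lines of "volume-id utilization-percent"; that input format and the function name are hypothetical.

```shell
# Print the IDs of volumes whose average utilization is below a threshold.
# Reads lines of "<volume-id> <avg-utilization-percent>" from stdin.
#   $1 = threshold in percent (50 matches the guideline above)
shrink_candidates() {
    awk -v limit="$1" '$2 + 0 < limit + 0 { print $1 }'
}
```

For instance, piping `printf 'vol-a 35\nvol-b 72\n'` into `shrink_candidates 50` prints only `vol-a`.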

Originally published March 11, 2024

Ankur Mandal